Faculty Fellow

NYU

Hey! I am a Faculty Fellow at the NYU Center for Data Science. I earned my PhD in the Natural Language Processing Lab at Bar-Ilan University, supervised by Prof. Yoav Goldberg.

I am on the academic job market for 2026–2027.

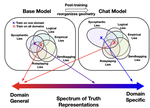

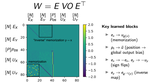

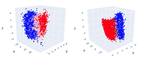

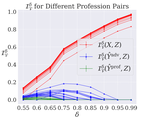

My research focuses on analyzing and controlling the internal representations of generative models, particularly language models. I study how neural networks encode structured information, use it to solve tasks, and represent interpretable concepts. I try (sometimes even successfully) to develop mathematically principled approaches to interpretability. I am particularly interested in understanding how simple structures, such as concept-aligned linear subspaces, emerge as a byproduct of the language modeling objective, and how we can use such structures to steer and control models.

During my PhD, I worked on techniques to selectively control information in neural representations, with some fun linguistic side tours. More recently, I’ve explored framing LMs as causal models and tackling questions of learnability in controlled settings.

Feel free to reach out if you have questions about my work or if you’re interested in potential collaborations in these areas! You can also find my CV here.

Interests

- NLP

- Representation Learning

- Interpretability

Education

MSc in Computer Science

Bar-Ilan University

BSc in Computer Science

Bar-Ilan University

BSc in Chemistry

Bar-Ilan University